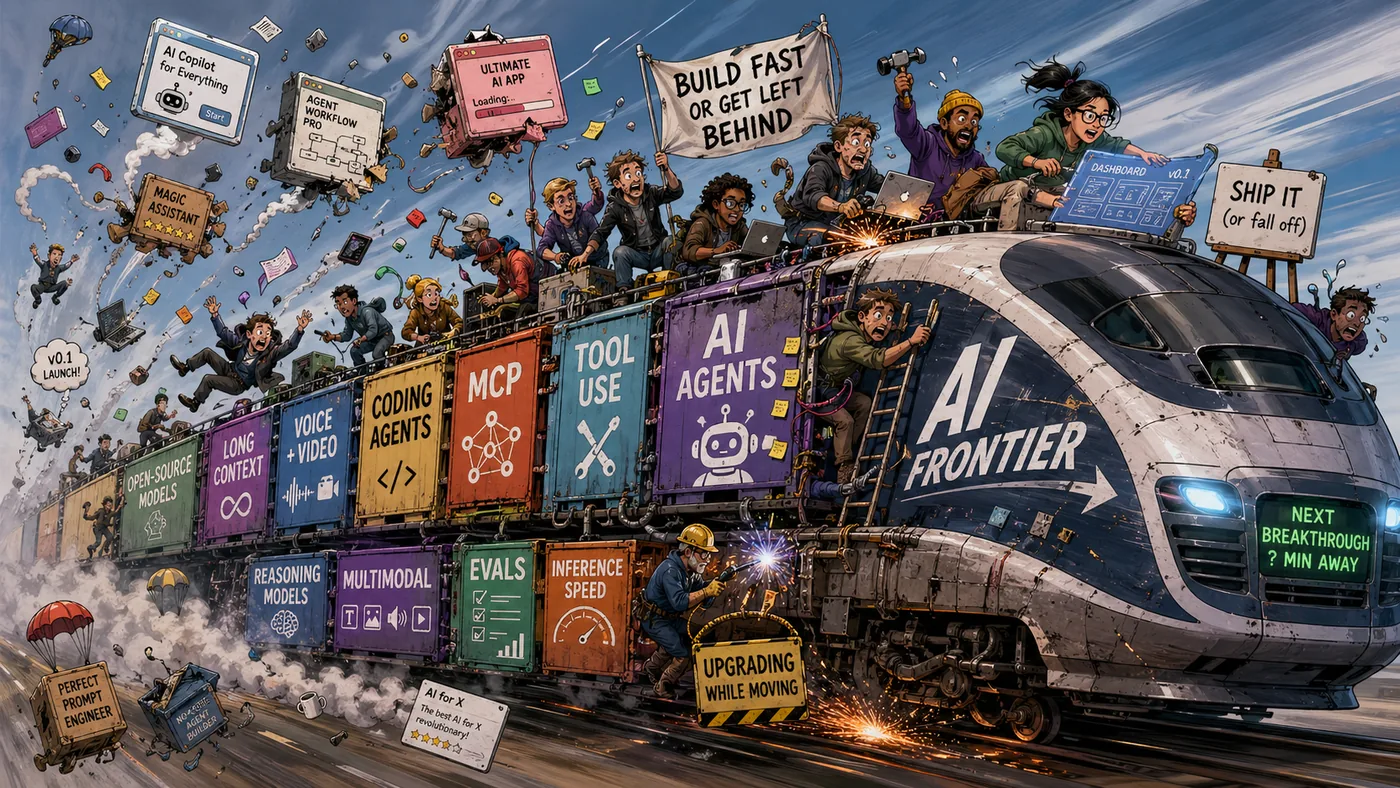

Building on a Moving Train

AI infrastructure feels brittle because every primitive beneath it moves. Here's what I learned building and killing infrastructure in a field that reinvents itself every quarter.

Every developer shipping AI right now has the same complaint: the developer experience is broken. Tutorials are out of date. Libraries have gotchas. Frameworks leak. “Solutions” need three or more hacks to fit. Every deployment is bespoke.

The primitives underneath AI engineering refuse to hold still. Model APIs, context windows, tool-calling conventions, agent frameworks, “best practices”: every layer moves, and not in lockstep.

Stable primitives let you build durable abstractions:

- SQL has held still for decades. You can build a good ORM on it.

- HTTP doesn’t change every quarter. You can build a good web framework on it.

LLM APIs are still negotiating what tool calls look like, how memory works, what a session is, whether the model controls the loop or you do. You can’t build stable infrastructure on top of that.

Infrastructure needs stable ground. AI engineering doesn’t have any.

You’re not on a platform watching the train go by. You’re on it. The train moves, and so do you. By the time you finish solving a problem, the train is somewhere else and so is the problem.

I learned this by killing my own pet product.

Starcite was a small thing: four months of side-project nights and weekends, a handful of happy beta testers, one startup running it in production. It was a durable, multi-user event log for agent coordination.

What changed was the category around it. Buyers stopped thinking of multi-agent coordination as a standalone concern. The frameworks they were already using had started to cover it, badly but sufficiently.

The users and I arrived at the same conclusion around the same time: the problem didn’t warrant a separate product anymore. So I shut it down.

Nothing I built was broken. The train had moved on. Once I saw this, I started seeing it everywhere.

The train moves at 1000 mph

Here’s what’s blown past in the last 24 months:

- Context went from 8K tokens to over 1M. Most summarization, eviction, and RAG schemes built for scarce context are over-engineered now. Many are obsolete.

- Tool calling went from regex hacks and JSON-mode coaxing to native model features. Scaffolding that was state-of-the-art in 2024 is tech debt in 2026.

- Agent frameworks cycle every six months. LangChain, LlamaIndex, AutoGen, CrewAI, the next wave, the wave after that. Every migration is throwaway work with a different name.

- “Best practices” decay every three months. The pattern you read on Twitter and shipped last quarter is wrong this quarter. By the time a book arrives, the book is a historical document.

Your abstractions don’t get old. They get wrong.

Old abstractions still do what they used to do; you just wish they did more. Wrong abstractions encode an assumption that no longer holds.

A retrieval layer that was smart when context was expensive is pure overhead when context is cheap. A prompt-chaining pattern that worked around missing tool calls becomes ceremony once the model calls tools natively.
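To make the retrieval example concrete, here is a minimal sketch of a context builder that only pays for retrieval when the documents no longer fit the window. Everything in it is an assumption for illustration: `rough_token_count` is a crude word count rather than a real tokenizer, `keyword_retrieve` is a naive stand-in for whatever retrieval you actually run, and the budgets are made-up numbers.

```python
from typing import Callable, List


def rough_token_count(text: str) -> int:
    # Crude stand-in for a tokenizer: counts whitespace-separated words.
    return len(text.split())


def keyword_retrieve(query: str, documents: List[str], budget: int) -> List[str]:
    # Naive retrieval: rank by words shared with the query, then pack
    # the highest-ranked documents that still fit inside the budget.
    terms = set(query.lower().split())
    ranked = sorted(
        documents,
        key=lambda doc: len(terms & set(doc.lower().split())),
        reverse=True,
    )
    picked, used = [], 0
    for doc in ranked:
        cost = rough_token_count(doc)
        if used + cost <= budget:
            picked.append(doc)
            used += cost
    return picked


def build_context(
    query: str,
    documents: List[str],
    budget: int,
    retrieve: Callable[[str, List[str], int], List[str]] = keyword_retrieve,
) -> List[str]:
    # If everything fits, skip retrieval entirely: the layer is pure overhead.
    total = sum(rough_token_count(doc) for doc in documents)
    if total <= budget:
        return list(documents)
    return retrieve(query, documents, budget)


docs = [
    "refund policy for annual plans",
    "office lunch menu for friday",
    "how to request a refund",
]
small_window = build_context("refund request", docs, budget=10)
large_window = build_context("refund request", docs, budget=1000)
```

With the small budget the retrieval path runs and drops the irrelevant document. With the large one the same call returns everything untouched, and the ranking code never executes. That is what an assumption going stale looks like in practice.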

The market moves too

It isn’t only the rails that shift. Markets consolidate emotionally, around the first convenient default. Buyers declare a category “solved enough” while the engineering problem stays wide open.

The competition stops being about being right and starts being about being easy. Depth becomes a losing pitch against “good enough, already integrated.” “Badly but sufficiently” beats “correctly but separately”, because buyers are running their own moving-train problem and would rather not adopt another track.

Your abstractions can hold up technically. Your primitives can stay stable. The category can still move away from you, because the market decided the problem wasn’t worth thinking about separately.

You’re building on two tracks at once. Both are moving.

Rewrites are the norm now

Systems are becoming temporary. Traditional software lasts decades. AI infrastructure doesn’t.

I see two patterns over and over:

People who ditched the framework. They tried LangChain, CrewAI, whichever wrapper was ascendant that quarter, and kept hitting the same wall. Upstream breaks. A new model release invalidates an integration. Their agent stops working in ways that are hard to debug because half the system is framework internals.

Eventually they gave up and wrote their own thin orchestration layer against raw model APIs. Not because they wanted to. Because framework churn cost more than rolling their own.
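For a sense of scale, a thin orchestration layer can be roughly this small. This is a sketch under stated assumptions, not any provider’s actual API: `ModelReply`, `ToolCall`, and `run_agent` are hypothetical names, and the model is just a callable you would wire to a raw model API yourself.

```python
import json
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


# Hypothetical shapes for illustration; real provider responses differ.
@dataclass
class ToolCall:
    name: str
    arguments: Dict[str, Any]


@dataclass
class ModelReply:
    text: str = ""
    tool_calls: List[ToolCall] = field(default_factory=list)


# A "model" here is any callable that maps the message history to a reply.
Model = Callable[[List[Dict[str, Any]]], ModelReply]


def run_agent(
    model: Model,
    tools: Dict[str, Callable[..., Any]],
    user_input: str,
    max_steps: int = 5,
) -> str:
    messages: List[Dict[str, Any]] = [{"role": "user", "content": user_input}]
    for _ in range(max_steps):
        reply = model(messages)
        if not reply.tool_calls:
            # No tool requests means the model is done.
            return reply.text
        messages.append({
            "role": "assistant",
            "content": reply.text,
            "tool_calls": [call.name for call in reply.tool_calls],
        })
        for call in reply.tool_calls:
            # Run each requested tool and feed the result back to the model.
            result = tools[call.name](**call.arguments)
            messages.append({
                "role": "tool",
                "name": call.name,
                "content": json.dumps(result),
            })
    raise RuntimeError("agent did not finish within max_steps")


def fake_model(messages: List[Dict[str, Any]]) -> ModelReply:
    # Stand-in model for a dry run: asks for one tool call, then answers.
    if messages[-1]["role"] == "tool":
        return ModelReply(text=f"The sum is {messages[-1]['content']}.")
    return ModelReply(tool_calls=[ToolCall("add", {"a": 2, "b": 3})])


answer = run_agent(fake_model, {"add": lambda a, b: a + b}, "What is 2 + 3?")
```

The loop is the whole layer. When something breaks, nothing is hidden inside framework internals, and swapping the model means rewriting one callable.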

People who forked and layered. They took a framework that almost fit, forked it, patched a few things, special-cased some model behavior, monkey-patched a few methods. Added one more patch. Added a few more. Ended up with a Frankenstein.

Every upgrade is a merge conflict. Every new model is a regression hunt. The fork eventually becomes the product, and nobody remembers which parts are theirs.

Both paths are rational. Stability isn’t coming from the rails, so you either manufacture it yourself or pay forever to keep someone else’s unstable layer running.

Today’s abstractions may not have the lifespan to justify the polish. The old frame (“build the right abstraction once”) assumes abstractions persist. Many won’t.

Many should be written to be rewritten.

You can’t win

Every obvious bet loses:

- Build for today’s limits and the limits lift. Half your product becomes a workaround for a problem that no longer exists.

- Build for tomorrow’s limits and you’re solving problems no one has yet. You ship ahead of the market and die waiting.

- Build abstractions that encode today’s assumptions and the assumptions break. Your abstractions become traps.

- Build depth into a category the market has emotionally declared solved and you lose to convenience, no matter how right you are.

There’s one move that does win: scope. The frameworks that survive aren’t the ones with the best implementation of one thing. They’re the ones that absorb adjacent categories before someone else does. Memory becomes part of orchestration. Eval becomes part of observability. Observability becomes part of agent platforms.

Langfuse and Arize Phoenix own observability mindshare right now. A few orchestration layers have stuck. But “winner” is a snapshot, not a verdict. Every one of them is one model release or category absorption away from being the next thing people ditch.

That’s why everyone keeps building the same thing. Everyone hits the same gaps: state, observability, evals, coordination. Everyone builds their own layer, because even the winning ones haven’t held still long enough to depend on.

That isn’t duplication out of ignorance. It’s duplication out of necessity.

How to build anyway

The field needs infrastructure. People will keep building it. Some will be useful for a year. Some will die in a quarter. Some will accidentally survive long enough to become load-bearing.

Two rules I’ve come to trust:

Treat rewrites as the default, not the exception. You can’t bolt anything down on a moving train. The roadmap with clean quarterly milestones is fiction. A meaningful fraction of what you ship this year will be rewritten or deleted next year. Treat that as normal cost, not failure.

Then let that flow into how you design:

- Less upfront abstraction.

- Less premature generalization.

- Less scaffolding for extensibility that’ll be irrelevant by the time you need it.

Solve the problem in front of you, cleanly enough that it works, loosely enough that rewriting it later doesn’t feel like grief.

Treat rewrites as accidents and you demoralize yourself. Treat them as scheduled maintenance and you keep shipping, and stop spending craft on durability the environment won’t honor.

Stay close to the models, not the frameworks or the workarounds. Both are extra cars between you and the engine, and both lurch hardest when the engine changes direction. The model API moves slower than the wrappers. What doesn’t depend on the framework survives the next framework cycle.

The model’s weaknesses move too:

- Context grows.

- Tool calling gets cleaner.

- Structured output gets more reliable.

Every elaborate scaffold you build to compensate for a current limitation is a bet that the limitation stays. Usually it doesn’t.

By the time your workaround is battle-tested, the reason it existed is gone. Build for the model you’ll have in three months, not the one you have today.
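One way to keep such a bet cheap is to fence the workaround behind a single capability check, so deleting it later is a one-function change. A minimal sketch, assuming a hypothetical `Capabilities` record that you maintain per model. The flag name and the brace-scanning fallback are illustrative, not a library API.

```python
import json
from dataclasses import dataclass
from typing import Any, Dict


@dataclass(frozen=True)
class Capabilities:
    # Hypothetical per-model flag, maintained by hand.
    native_structured_output: bool


def parse_reply(raw: str, caps: Capabilities) -> Dict[str, Any]:
    if caps.native_structured_output:
        # The model returns clean JSON, so no scaffolding is needed.
        return json.loads(raw)
    return _extract_json_workaround(raw)


def _extract_json_workaround(raw: str) -> Dict[str, Any]:
    # WORKAROUND: delete once every target model has native structured output.
    # Pulls the outermost {...} span out of a chatty reply.
    start, end = raw.find("{"), raw.rfind("}")
    if start == -1 or end < start:
        raise ValueError("no JSON object found in reply")
    return json.loads(raw[start:end + 1])


old_model = Capabilities(native_structured_output=False)
new_model = Capabilities(native_structured_output=True)

from_old = parse_reply('Sure! {"status": "ok"} Hope this helps.', old_model)
from_new = parse_reply('{"status": "ok"}', new_model)
```

The workaround lives in one private function with a note saying when to delete it. Nothing else in the codebase knows it exists.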

Software engineering taught me to build abstractions that last. AI engineering is teaching me to build abstractions I can afford to lose.

Related: “Universal LLM Memory Does Not Exist” on why generic memory solutions keep reshaping, “LLM Memory Systems Explained” for the current landscape, and the “Ultimate Guide to LLM Memory” for the full picture.